Ontology is generally considered the study of what exists. It’s a philosophical area of study because there is no clear-cut answer as to what exists. Most agree that trees exist. Does the number “three” exist?

The point of this section is not to argue that the current theory will provide the answer to what exists and what doesn’t. The point of this section is to lay out how this theory will use the terms “exists” and “real”, and to explain how certain fundamental concepts like “causality” and “information” relate to these terms.

Process

The ontology considered here is probably closest to what is called a process ontology. As Philip Goff is fond of saying, physics cannot tell us what things are in themselves, it only tells us what things do, which is to say, how they interact with other things. This doesn’t mean there are no “things” there. It means we can only know about those things by what they do.

Constructor Theory provides a rigorous framework to describe a process (which Constructor Theory calls a task):

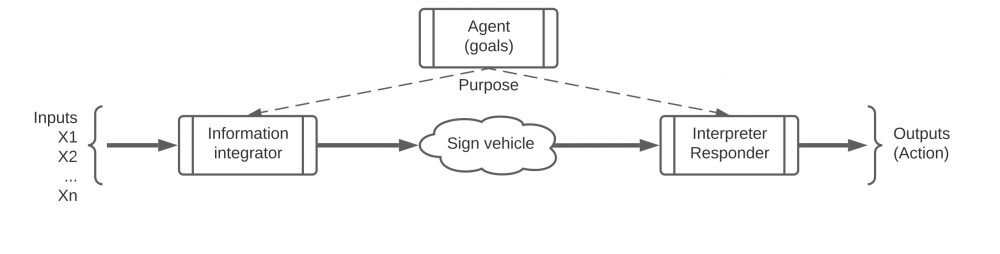

Input (x1, x2, … xn) –>[constructor/mechanism/system]–> Output (y1, y2, …ym)

So in the Psychule framework, something exists if it is a mechanism for at least one process. If it doesn’t interact with the environment we cannot ever know about it and would have no reason to care about it.

Patterns

Our axioms say patterns are real. The best explanation of what this axiom is trying to say is probably given by Daniel Dennett’s paper “Real Patterns”. The bottom line is that patterns are real abstract things. This concept is useful to us because our framework relies heavily on patterns. The specific set of inputs to a task constitutes a pattern. The combination of input, mechanism, and output for a given task constitutes a pattern. The set of all input/output combinations for a given mechanism constitutes a pattern. In fact, for anything we identify as existing, we do so by recognizing a set of input/output combinations for that thing, i.e., the patterns associated with that thing, although sometimes recognizing the outputs alone is enough, if those outputs are unique to that thing.

Causality

Causality brings in the topic of our third axiom: things change. Causality is specifically about how things change over time. More specifically, causality is about patterns in how things change.

To my knowledge, causality was most famously addressed by Aristotle and his Four Causes. Over time, causal analysis got whittled down to just cause and effect. I think this whittling was unfortunate because Aristotle’s analysis was accurate, more descriptive, and therefore more useful. Aristotle recognized four causes: the material, efficient, formal, and final. However, instead of calling these “causes”, I think they would be better described as aspects of causation.

His paradigm case was the creation of a sculpture. The material cause was the material from which the sculpture was made, say, clay. The efficient cause was the sculptor. The formal cause was the result, say, a statue of a man. The final cause was the reason the sculpture was made, say, to fulfill a contract from the city.

It should be quickly obvious how Aristotle’s framework applies to the current framework. The material cause is the input, the efficient cause is the mechanism, and the formal cause is the output.

Input (material) –>[efficient]–> Output (formal).

The final cause will be addressed in the upcoming section on purpose/function.

The current framework describes causality as follows: a given mechanism causes an output when presented with the input. I should point out that causality as described here is relative. You could just as easily reverse the roles of the input and the mechanism, saying a given input, when presented with the mechanism, causes the output. That’s why, I presume, in the standard discussion of “cause” and “effect”, the input and mechanism are lumped together as “the cause”. But breaking them out lets you focus on one (think of the many different things a given artist could make) or the other (think of the many things different artists could make from a given lump of clay).

Information

There’s another aspect of causality which is important. In any system which at least seems to be governed by rules (like physical laws), every interaction generates correlation. In the absence of any interactions outside the given system, output is correlated with both the input and the mechanism which created it. Also, if there is more than one output, those outputs may be correlated with each other. This correlation is the essence of information. At the level of quantum physics, this property is referred to as entanglement. In Information Theory this property is referred to as mutual information. This mutual information is a physically derived relation between physical systems, and therefore is mind independent. But also note that the physical properties of an isolated system say nothing about its correlations.

Note that there is a value for the mutual information between any two physical systems. So there is a value for the mutual information (MI) between systems A and B, and a different value for the MI between that same system A and system C, and so on. There is a value for the MI between A and every other conceivable system. So where does that leave us? Not all MI values are equal. For example, the MI between smoke and a fire is fairly high, whereas the MI between smoke and a rabbit is lower [but not necessarily insignificant, if there is a rabbit cooking over the fire]. But the MI between smoke and the credit card in my wallet is almost certainly negligible [but not zero!].
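To make the comparison concrete, here is a minimal sketch of how an MI value can be computed from a joint probability table, using the standard information-theoretic formula. The function name `mutual_information` and every probability below are assumptions made up for illustration; they are not measurements of real smoke, fires, or credit cards.

```python
import math

def mutual_information(joint):
    """Mutual information in bits for a joint distribution {(a, b): probability}."""
    # Marginal distributions of each variable.
    pa, pb = {}, {}
    for (a, b), p in joint.items():
        pa[a] = pa.get(a, 0.0) + p
        pb[b] = pb.get(b, 0.0) + p
    # Sum of p(a,b) * log2( p(a,b) / (p(a) * p(b)) ) over outcomes with p > 0.
    return sum(
        p * math.log2(p / (pa[a] * pb[b]))
        for (a, b), p in joint.items()
        if p > 0
    )

# Hypothetical joint probabilities over (smoke present?, fire present?):
# smoke and fire almost always show up together.
smoke_fire = {
    (True, True): 0.09,
    (True, False): 0.01,
    (False, True): 0.01,
    (False, False): 0.89,
}

# Hypothetical joint probabilities over (smoke present?, card in wallet?):
# very nearly independent, but not exactly.
smoke_card = {
    (True, True): 0.051,
    (True, False): 0.049,
    (False, True): 0.449,
    (False, False): 0.451,
}

mi_fire = mutual_information(smoke_fire)
mi_card = mutual_information(smoke_card)
print(f"MI(smoke; fire) = {mi_fire:.4f} bits")
print(f"MI(smoke; card) = {mi_card:.6f} bits")
```

With these made-up numbers the smoke/fire value comes out thousands of times larger than the smoke/card value, yet the latter is still above zero, which is the point of the bracketed remark above.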

To restate: we said a mechanism causes the output when presented with the input. We can also say that a mechanism causes the output to be correlated with both the input and the mechanism. Thus, a mechanism causes information.

Computation

Much heat (and not so much light) occurs in discussions of the relation between consciousness and computers. Here I simply want to establish the ontology of computation with regard to the Psychule framework.

Computer theory has established that all computation can be reduced to some combination of four basic operations: COPY, AND, OR, and NOT. There are other operations, such as EXCLUSIVE OR (XOR), which means something like “x OR y, but not both”, but operations such as XOR can be built from combinations of the first four.

[Actually, you can build all computation from just two of these operations: NOT and AND. For example, COPY can be made from two NOTs (your basic double negative). Similarly, “a OR b” is equivalent to “NOT ( (NOT a) AND (NOT b) )”. This is why you can build any computer system with just NAND gates.]
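The constructions in the bracketed note can be checked mechanically. The sketch below builds NOT, AND, OR, COPY, and XOR from a single `nand` function and verifies each against Python’s built-in boolean operators. The function names are my own labels, and the XOR wiring shown is just one of several possible ones.

```python
from itertools import product

def nand(a, b):
    """The only primitive: true unless both inputs are true."""
    return not (a and b)

def not_(a):
    # NAND of a value with itself is its negation.
    return nand(a, a)

def and_(a, b):
    # AND is NAND followed by NOT.
    return not_(nand(a, b))

def or_(a, b):
    # "a OR b" is "NOT ((NOT a) AND (NOT b))", i.e. NAND of the negations.
    return nand(not_(a), not_(b))

def copy(a):
    # The double negative.
    return not_(not_(a))

def xor(a, b):
    # "a OR b, but not both".
    return and_(or_(a, b), nand(a, b))

# Check every construction against the built-in operators.
for a, b in product([False, True], repeat=2):
    assert not_(a) == (not a)
    assert copy(a) == a
    assert and_(a, b) == (a and b)
    assert or_(a, b) == (a or b)
    assert xor(a, b) == (a != b)
print("all gates match their truth tables")
```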

So what is the significance of computation in the Psychule framework? Computation processes mutual information. Assume x is a physical system that has some mutual information with respect to y. After the operation x COPY, the output of that operation, say z, now has (approx.) the same mutual information with respect to y. In fact, we can define COPY as any operation for which the output retains the same mutual information as the input. Likewise, the NOT operation produces output that has an inverse correlation with respect to the mutual information of the input. The AND and OR operations similarly produce outputs with mutual information relative to the inputs, but the mutual information of separate inputs is combined into the output.
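Here is a small numerical sketch of that claim. It assumes a toy setup that is not part of the framework itself: y is a fair bit, x is a noisy record of y that agrees with it 90% of the time, and the helper names `mutual_information` and `apply_to_second` are hypothetical. It then compares the MI that x, COPY x, and NOT x each have with y, and shows an AND output carrying MI about one of its inputs.

```python
import math

def mutual_information(joint):
    """Mutual information in bits for a joint distribution {(a, b): probability}."""
    pa, pb = {}, {}
    for (a, b), p in joint.items():
        pa[a] = pa.get(a, 0.0) + p
        pb[b] = pb.get(b, 0.0) + p
    return sum(
        p * math.log2(p / (pa[a] * pb[b]))
        for (a, b), p in joint.items()
        if p > 0
    )

def apply_to_second(joint, op):
    """Joint distribution of (a, op(b)), merging outcomes that coincide."""
    out = {}
    for (a, b), p in joint.items():
        key = (a, op(b))
        out[key] = out.get(key, 0.0) + p
    return out

# Assumed toy distribution over (y, x): x matches y 90% of the time.
y_x = {(0, 0): 0.45, (0, 1): 0.05, (1, 0): 0.05, (1, 1): 0.45}

mi_x = mutual_information(y_x)
mi_copy = mutual_information(apply_to_second(y_x, lambda x: x))      # z = COPY x
mi_not = mutual_information(apply_to_second(y_x, lambda x: 1 - x))   # z = NOT x

# AND of two independent fair bits x1, x2: joint over (x1, x1 AND x2).
x1_out = {}
for x1 in (0, 1):
    for x2 in (0, 1):
        key = (x1, x1 & x2)
        x1_out[key] = x1_out.get(key, 0.0) + 0.25
mi_and = mutual_information(x1_out)

print(f"MI(y; x)            = {mi_x:.4f} bits")
print(f"MI(y; COPY x)       = {mi_copy:.4f} bits")
print(f"MI(y; NOT x)        = {mi_not:.4f} bits")
print(f"MI(x1; x1 AND x2)   = {mi_and:.4f} bits")
```

In this toy case COPY and NOT both leave the MI with y unchanged (NOT only flips the direction of the correlation), while the AND output carries some, but not all, of the information in each of its inputs.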

Bottom line: information and computation can be physically described without reference to meaning, intentions, or minds. But then, where do these things (meaning, intentions, minds) come from? Mutual information is an affordance for these things. If x has mutual information with respect to y, and some system “cares” about y such that it “wants” to do a given action when y is the case, then that system can achieve that “goal” by attaching the appropriate action to x.

So where do cares, wants, and goals come from? Read on.